jina-embeddings-v5-omni-small: Multi-Task Omni Embedding Base (Small)

Model Overview

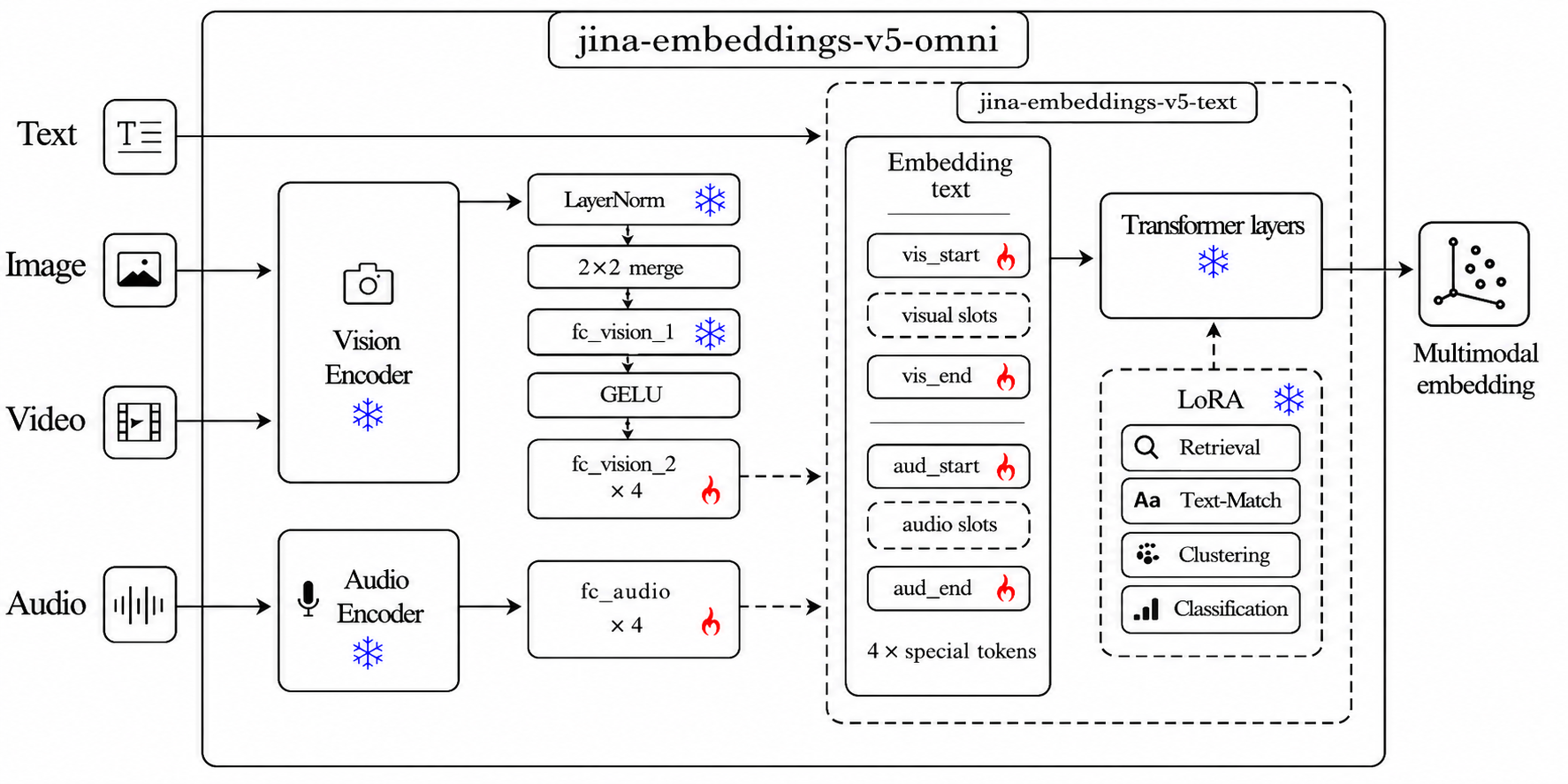

jina-embeddings-v5-omni-small is a multimodal embedding model that accepts text, images, video, and audio and produces embeddings in a shared vector space aligned with the text-only jinaai/jina-embeddings-v5-text-small — so you can index with text and query with any modality, no reindexing.

This is the base repository — it holds all task adapters (retrieval, classification, clustering, text-matching). For a single task, pre-merged task-specific variants are also available:

- jinaai/jina-embeddings-v5-omni-small-classification
- jinaai/jina-embeddings-v5-omni-small-clustering
- jinaai/jina-embeddings-v5-omni-small-retrieval
- jinaai/jina-embeddings-v5-omni-small-text-matching

| Feature | Value |
|---|---|
| Parameters | ~1.74B |
| Embedding Dimension | 1024 |
| Supported Tasks | retrieval, classification, clustering, text-matching |
| Max Sequence Length | 32768 |
| Pooling Strategy | Last-token |
| Supported Inputs | text, image, video, audio |
| Supported File Types | images: .jpg, .jpeg, .png, .gif, .webp, .bmp, .tif, .tiff, .avif, .heic, .svg; video: .mp4, .avi, .mov, .mkv, .webm, .flv, .wmv; audio: .wav, .mp3, .flac, .ogg, .m4a, .opus; documents: .pdf |
| Matryoshka Dimensions | 32, 64, 128, 256, 512, 768, 1024 |
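If you want to pre-sort a mixed folder of files before encoding, the extension lists in the table can be turned into a small lookup. The sketch below is only an illustration: `EXTENSIONS` and `guess_modality` are hypothetical names, not part of the model's API, and the model's own loaders may detect types differently (for example by content sniffing).

```python
from pathlib import Path

# Extension lists copied from the "Supported File Types" row of the table.
EXTENSIONS = {
    "image": {".jpg", ".jpeg", ".png", ".gif", ".webp", ".bmp",
              ".tif", ".tiff", ".avif", ".heic", ".svg"},
    "video": {".mp4", ".avi", ".mov", ".mkv", ".webm", ".flv", ".wmv"},
    "audio": {".wav", ".mp3", ".flac", ".ogg", ".m4a", ".opus"},
    "document": {".pdf"},
}


def guess_modality(path: str) -> str:
    """Return the modality for a file path, or 'unknown' if the suffix is not listed."""
    suffix = Path(path).suffix.lower()  # case-insensitive match on the last suffix
    for modality, suffixes in EXTENSIONS.items():
        if suffix in suffixes:
            return modality
    return "unknown"


for name in ["photo.JPG", "clip.mp4", "speech.flac", "report.pdf", "notes.txt"]:
    print(name, "->", guess_modality(name))
```

Files that come back as "unknown" (including extensionless paths) are not necessarily unsupported; this helper only looks at the file name.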

Install

# core

pip install transformers torch pillow numpy

# Optional — install only the extras for the modalities you actually use:

pip install librosa soundfile # audio decoding

pip install av imageio # video decoding (pure-Python, no codec daemon)

pip install pdf2image pypdfium2 # PDF rendering

pip install cairosvg pillow # SVG rendering

pip install "vllm==0.20.1" # high-throughput serving (validated)

pip install sentence-transformers # one-call multimodal API

For minimum versions see the Requirements section below (transformers >= 4.57, torch >= 2.5; vLLM path validated with vllm == 0.20.1).

Quickstart

from PIL import Image

import librosa, torch

from transformers import AutoModel, AutoProcessor, WhisperFeatureExtractor

repo = "jinaai/jina-embeddings-v5-omni-small"

model = AutoModel.from_pretrained(repo, trust_remote_code=True, default_task="retrieval").eval()

proc = AutoProcessor.from_pretrained(repo, trust_remote_code=True)

# model.embed(**inputs) returns L2-normalized last-token embeddings.

t_vec = model.embed(**proc(text="Query: Which planet is known as the Red Planet?", return_tensors="pt").to(model.device))

i_vec = model.embed(**proc(images=Image.open("photo.jpg"), text="<|vision_start|><|image_pad|><|vision_end|>", return_tensors="pt").to(model.device))

v_vec = model.embed(**proc(videos="clip.mp4", text="<|vision_start|><|video_pad|><|vision_end|>", return_tensors="pt").to(model.device))

# Audio has no string placeholder — build token ids from config.

audio, _ = librosa.load("speech.wav", sr=16000)

feat = WhisperFeatureExtractor(feature_size=128)(audio, sampling_rate=16000, return_tensors="pt")["input_features"]

cfg = model.config

n = feat.shape[-1] // 4

ids = torch.tensor([[cfg.audio_start_token_id, *[cfg.audio_token_id]*n, cfg.audio_end_token_id]])

a_vec = model.embed(
    input_ids=ids.to(model.device),
    attention_mask=torch.ones_like(ids).to(model.device),
    input_features=feat.to(model.device, dtype=next(model.parameters()).dtype),
)
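To see where the audio placeholder count comes from, here is the arithmetic behind `n = feat.shape[-1] // 4`, worked through in plain Python. It assumes the `WhisperFeatureExtractor` defaults are unchanged (input padded or truncated to 30 s, hop length of 160 samples); `audio_sequence_length` is a hypothetical helper, and the divisor 4 is taken from the snippet above rather than from any documented constant.

```python
SAMPLE_RATE = 16_000   # sr passed to librosa.load above
HOP_LENGTH = 160       # WhisperFeatureExtractor default (assumed unchanged)
CHUNK_SECONDS = 30     # default padding/truncation window (assumed unchanged)


def audio_sequence_length(downsample: int = 4) -> int:
    """Length of input_ids: start token + n audio tokens + end token."""
    mel_frames = CHUNK_SECONDS * SAMPLE_RATE // HOP_LENGTH  # feat.shape[-1]
    n_audio_tokens = mel_frames // downsample               # n in the snippet
    return 1 + n_audio_tokens + 1


print(audio_sequence_length())  # 752
```

Under these assumptions every clip yields 3000 mel frames and therefore 750 audio tokens, regardless of its actual duration.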

For retrieval, use encode_query() for query-side embeddings and encode_document() for document-side embeddings. A bare encode(text) call does not know which retrieval side you intended.

No dtype, device, min_pixels, or custom pooling code needed — sensible defaults live in the model config (bf16 weights, 256–1280 vision tokens).

Requirements

- transformers>=4.57 (recommend >=5.1 for the small variants)
- torch>=2.5

Optional:

- sentence-transformers: one-call API for all 4 modalities
- librosa: audio decoding
- av: video decoding (pip install av)
- vllm==0.20.1: high-throughput serving; H100 deployments may also need DeepGEMM installed for vLLM FP8 kernels

Selective Modality Loading

By default all components (vision + audio towers + text encoder) are loaded. To save memory, pick a subset — the unused towers are skipped at load time:

from transformers import AutoModel

AutoModel.from_pretrained("jinaai/jina-embeddings-v5-omni-small", trust_remote_code=True, modality="omni") # all (default)

AutoModel.from_pretrained("jinaai/jina-embeddings-v5-omni-small", trust_remote_code=True, modality="vision") # vision + text

AutoModel.from_pretrained("jinaai/jina-embeddings-v5-omni-small", trust_remote_code=True, modality="audio") # audio + text

AutoModel.from_pretrained("jinaai/jina-embeddings-v5-omni-small", trust_remote_code=True, modality="text") # text only

The same parameter works via sentence-transformers:

from sentence_transformers import SentenceTransformer

SentenceTransformer("jinaai/jina-embeddings-v5-omni-small", trust_remote_code=True, model_kwargs={"modality": "vision"})

Via sentence-transformers

from sentence_transformers import SentenceTransformer

# Base repo holds all 4 task adapters — pick one at load time.

model = SentenceTransformer(
    "jinaai/jina-embeddings-v5-omni-small",
    trust_remote_code=True,
    model_kwargs={"default_task": "retrieval"},
)

# URLs, local paths (with or without extension), PIL.Image, np.ndarray,

# torch.Tensor, bytes, and BytesIO are all accepted directly.

q_vec = model.encode_query("Which planet is known as the Red Planet?")

d_vec = model.encode_document("Mars is often referred to as the Red Planet due to its reddish appearance.")

i_vec = model.encode("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/tasks/car.jpg")

v_vec = model.encode("https://huggingface.co/datasets/raushan-testing-hf/videos-test/resolve/main/sample_demo_1.mp4") # needs `pip install av`

a_vec = model.encode("https://huggingface.co/datasets/Narsil/asr_dummy/resolve/main/mlk.flac") # needs `pip install librosa soundfile`

# Fused multimodal — a tuple becomes ONE embedding in a single forward pass:

emb = model.encode((
    "Winter boots, waterproof leather upper",
    "https://.../boot.jpg",
    "https://.../boot.mp4",
))

For non-retrieval tasks (classification / clustering / text-matching), reload with the corresponding default_task; no prompt_name is needed.


Accepted video inputs

Path (.mp4 .avi .mov .mkv .webm .flv .wmv, or extensionless — content-sniffed), HTTP(S) URL, bytes/io.BytesIO, and in-memory np.ndarray / torch.Tensor of shape (T, H, W, 3|4) with dtype uint8. In-memory arrays are encoded to MP4 on the fly (needs pip install imageio imageio-ffmpeg).

import numpy as np

# (T, H, W, 3) uint8 — e.g. from decord, imageio, or an rgb frame buffer

frames = np.zeros((16, 224, 224, 3), dtype=np.uint8)

v_vec = model.encode(frames)
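Frame buffers from other libraries often arrive as floats or with the channel axis first, so it can help to check the array before encoding. The sketch below validates the `(T, H, W, 3|4)` uint8 layout described above; `check_video_array` and `to_uint8` are hypothetical helpers, and the float-to-uint8 conversion assumes pixel values in `[0, 1]`.

```python
import numpy as np


def check_video_array(frames: np.ndarray) -> None:
    """Raise ValueError unless frames has shape (T, H, W, 3|4) and dtype uint8."""
    if frames.ndim != 4:
        raise ValueError(f"expected 4 dims (T, H, W, C), got shape {frames.shape}")
    if frames.shape[-1] not in (3, 4):
        raise ValueError(f"expected 3 or 4 channels last, got {frames.shape[-1]}")
    if frames.dtype != np.uint8:
        raise ValueError(f"expected dtype uint8, got {frames.dtype}")


def to_uint8(frames: np.ndarray) -> np.ndarray:
    """Convert float frames to uint8, assuming values lie in [0, 1]."""
    return (np.clip(frames, 0.0, 1.0) * 255).round().astype(np.uint8)


float_frames = np.ones((16, 224, 224, 3), dtype=np.float32)
frames = to_uint8(float_frames)
check_video_array(frames)  # passes silently
```

A channel-first array such as `(T, 3, H, W)` would need `np.transpose(frames, (0, 2, 3, 1))` before this check.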

Via vLLM

The base repo holds all 4 task adapters. Pick one task per vLLM instance via hf_overrides:

from vllm import LLM

llm = LLM(
    model="jinaai/jina-embeddings-v5-omni-small",
    runner="pooling",
    trust_remote_code=True,
    hf_overrides={"task": "retrieval"},  # or: classification / clustering / text-matching
)

outs = llm.embed([{"prompt": "Which planet is known as the Red Planet?"}])

Or via CLI:

vllm serve jinaai/jina-embeddings-v5-omni-small \
    --trust-remote-code \
    --hf-overrides '{"task": "retrieval"}'

Alternatively, set JINA_V5_TASK=retrieval in the environment. Output is bit-exact with the corresponding pre-merged -retrieval / -classification / -clustering / -text-matching variant.
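Because each vLLM instance serves a single task, serving several tasks means launching several instances. As a rough sketch, the commands could be generated like this; the port numbers and the `serve_command` helper are assumptions for illustration, while `--port` is the standard `vllm serve` option for choosing the listening port.

```python
import json
import shlex

MODEL = "jinaai/jina-embeddings-v5-omni-small"
TASKS = ["retrieval", "classification", "clustering", "text-matching"]


def serve_command(task: str, port: int) -> list[str]:
    """Build the argv for one `vllm serve` instance pinned to a single task."""
    if task not in TASKS:
        raise ValueError(f"unknown task {task!r}; expected one of {TASKS}")
    return [
        "vllm", "serve", MODEL,
        "--trust-remote-code",
        "--hf-overrides", json.dumps({"task": task}),  # JSON string, as in the CLI example
        "--port", str(port),                           # port choice is an assumption
    ]


# One command per task, on consecutive ports starting at 8000.
for offset, task in enumerate(TASKS):
    print(shlex.join(serve_command(task, 8000 + offset)))
```

Each printed line can be pasted into a shell; `shlex.join` takes care of quoting the JSON override.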

Matryoshka (truncating embeddings)

All three backends support truncating the full embedding to a shorter dimension with L2 re-normalization, so the result stays unit-norm:

# transformers

vec = model.embed(truncate_dim=256, **proc(text="hello", return_tensors="pt"))

# or

vec = model.encode(["hello"], task="retrieval", truncate_dim=256)

# sentence-transformers

vec = model.encode("hello", truncate_dim=256)

# vLLM — ask the pooler for a smaller embedding

from vllm import PoolingParams

outs = llm.embed(prompts, pooling_params=PoolingParams(dimensions=256))

# or truncate + renormalize the full-dim output yourself:

import numpy as np

full = np.asarray(outs[0].outputs.embedding)

vec = full[:256] / np.linalg.norm(full[:256])
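The manual truncate-and-renormalize step above extends naturally to a whole batch. The following is a minimal NumPy sketch, not part of any of the three backends: `truncate_embeddings` is a hypothetical helper, and rejecting dimensions outside the listed Matryoshka set is a design choice made here rather than a requirement stated by the model.

```python
import numpy as np

# Dimensions listed in the "Matryoshka Dimensions" row of the feature table.
MATRYOSHKA_DIMS = (32, 64, 128, 256, 512, 768, 1024)


def truncate_embeddings(full: np.ndarray, dim: int) -> np.ndarray:
    """Keep the first `dim` components of each row and L2-renormalize."""
    if dim not in MATRYOSHKA_DIMS:
        raise ValueError(f"dim must be one of {MATRYOSHKA_DIMS}, got {dim}")
    head = np.atleast_2d(full)[:, :dim]                  # (batch, dim)
    norms = np.linalg.norm(head, axis=1, keepdims=True)  # (batch, 1)
    return head / np.clip(norms, 1e-12, None)            # guard against zero rows


# Stand-in for real model output: 3 random unit-norm vectors of size 1024.
rng = np.random.default_rng(0)
full = rng.normal(size=(3, 1024))
full /= np.linalg.norm(full, axis=1, keepdims=True)

small = truncate_embeddings(full, 256)
print(small.shape)
```

Vectors truncated to different dimensions are not comparable with each other, so an index and its queries should use the same `dim`.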

Batching

Pass a list to encode many inputs in one call.

# sentence-transformers — any modality

t_vecs = model.encode(["query 1", "query 2"])

i_vecs = model.encode([Image.open("a.jpg"), Image.open("b.jpg")])

v_vecs = model.encode(["clip1.mp4", "clip2.mp4"])

a_vecs = model.encode(["speech1.wav", "speech2.wav"])

# raw transformers — text (native padded batch)

inputs = proc(text=["query 1", "query 2"], padding=True, truncation=True, return_tensors="pt").to(model.device)

vecs = model.embed(**inputs) # (2, dim)

# vLLM — list of request dicts, any modality

outs = llm.embed([
    {"prompt": "query 1"},
    {"prompt": "query 2"},
])

For sentence-transformers, images / video / audio are forwarded per-sample (one forward pass each). Text is truly batched. For large-scale multimodal throughput, prefer vLLM.

Compatibility

Embeddings produced by this model share a vector space with:

- jinaai/jina-embeddings-v5-text-small: text-only
- jinaai/jina-embeddings-v5-text-small (via matching adapter)

You can index text with the v5-text-small model and query it with image, video, or audio embeddings from jina-embeddings-v5-omni-small, with no reindexing.
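Since the embeddings are L2-normalized, searching a text index with a query from another modality reduces to a dot product. Here is a minimal sketch using random stand-in vectors in place of real model output; `top_k` is a hypothetical helper, and a production setup would more likely use a vector database.

```python
import numpy as np


def top_k(query_vec: np.ndarray, index: np.ndarray, k: int = 3) -> list[tuple[int, float]]:
    """Return (row, score) pairs for the k most similar index rows.

    For unit-norm vectors the dot product equals cosine similarity.
    """
    scores = index @ query_vec
    order = np.argsort(-scores)[:k]  # highest score first
    return [(int(i), float(scores[i])) for i in order]


# Stand-ins: 5 "text document" vectors and one "image query" vector near document 2.
rng = np.random.default_rng(0)
index = rng.normal(size=(5, 1024))
index /= np.linalg.norm(index, axis=1, keepdims=True)

query = index[2] + 0.01 * rng.normal(size=1024)
query /= np.linalg.norm(query)

hits = top_k(query, index, k=2)
print(hits[0][0])  # row of the best match
```

With real data, `index` would hold document embeddings from the text model and `query` an image, video, or audio embedding from this model, both produced with the same task adapter.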

License

CC BY-NC 4.0. For commercial use, contact us.
